15-Day n8n Mastery for ML Engineers: From Zero to Production Hero

15-Day n8n Challenge: Automate Your ML Stack!

Still wrestling with brittle pipelines and manual scripts? In just 15 days, transform into an n8n wizard—building bulletproof MLOps workflows, AI agents, and production-grade automations that top teams pay six figures for. Don’t get left behind while everyone else accelerates development with visual code power. Hit play now and own your ML infrastructure!

Dive into our 15-day n8n Mastery Plan designed explicitly for Machine Learning Engineers. In this action-packed series, you’ll:

Days 1–5: Solidify Your Foundation

• Understand n8n’s core architecture—workflows, nodes, executions, and credentials

• Spin up a production-ready Docker + PostgreSQL setup from day one

• Master triggers (Webhooks, Schedules) and secure credential managementDays 6–10: Supercharge with Code & AI

• Inject custom JavaScript and Python into your workflows for no-code tasks and advanced data transforms

• Unleash n8n’s native AI stack: build agents, connect LLMs, and spin up RAG pipelines with vector stores

• Automate model training, inference, and monitoring with real-world MLOps patternsDays 11–15: Elevate to Production-Grade

• Scale horizontally with queue mode, Redis brokers, and worker clusters

• Lock down security with SSL/TLS proxies, secret management, and RBAC

• Version-control everything—GitOps for workflows, CI/CD deployments, and custom node development

By Day 15, you’ll deliver a capstone: a fully automated training-to-deployment loop that validates model performance, gates releases, and notifies your team automatically. Whether you’re a solo ML dev or part of a fast-moving AI team, this roadmap arms you with the skills to automate, orchestrate, and scale—fast. Hit Play and start your n8n journey today!

#n8n #MLOps #MachineLearning #Automation #AIWorkflow #n8nTutorial #DataEngineering #AIStack #ProductionML #15DayChallenge

The 15-Day PyTorch Deep Learning Engineer's Handbook: From Fundamentals to Expert Proficiency

Part I: The Bedrock of PyTorch (Days 1-3)

This initial phase focuses on building an unshakable foundation. A true expert understands the "why" behind the tools, not just the "how." The following three days are dedicated to deconstructing PyTorch into its core components, ensuring the development of a deep intuition for its design, philosophy, and operational mechanics. This foundation is paramount for tackling the advanced architectures and engineering challenges in the subsequent sections.

Day 1: Tensors and Computational Foundations

Objective: To achieve mastery over torch.Tensor, the central data structure in PyTorch. By the end of this day, the learner will understand its properties, operations, and its critical role in enabling GPU-accelerated numerical computation, which is the cornerstone of modern deep learning.

Theoretical Knowledge: The Essence of Tensors

At the very core of PyTorch lies the tensor. Conceptually, a tensor is a multi-dimensional array, serving as the fundamental building block for all data, from inputs and outputs to the model's internal parameters. While this sounds similar to the

ndarray object in NumPy, a widely used Python library for scientific computing, PyTorch tensors possess two transformative capabilities that set them apart: native support for Graphics Processing Unit (GPU) acceleration and seamless integration with an automatic differentiation engine called autograd. This allows for massive parallel processing and the automatic computation of gradients, which are essential for training neural networks.

The deliberate mirroring of NumPy's API in PyTorch is a strategic design choice. It significantly lowers the barrier to entry for the vast community of data scientists and researchers already proficient in the NumPy ecosystem, allowing them to leverage existing knowledge rather than learning a completely new syntax. This focus on interoperability is further reinforced by the zero-copy "NumPy Bridge," a performance feature that allows seamless integration into existing data science pipelines that rely on libraries like pandas and Scikit-learn. This design signals that the framework is built to be a component within a larger ecosystem, not a walled garden. An expert engineer leverages this by preprocessing complex data with familiar tools before efficiently converting to PyTorch tensors for model training.

When working with tensors, three attributes are of critical importance and must always be considered :

shape: This attribute describes the dimensions of the tensor. For example, a shape oftorch.Size()represents a matrix with 2 rows and 3 columns. Understanding and managing tensor shapes is paramount, as mismatched shapes are the most common source of errors in deep learning code. Operations like matrix multiplication have strict rules regarding the shapes of the tensors involved.dtype: This attribute specifies the data type of the elements within the tensor, such astorch.float32ortorch.int64. For deep learning, the default and most common data type istorch.float32(32-bit floating-point), as it provides a good balance between precision and memory footprint, which is crucial for the gradient calculations during training.device: This attribute indicates the hardware on which the tensor's data is stored, typically thecpuor acudadevice (for NVIDIA GPUs). A fundamental rule in PyTorch is that operations between two or more tensors can only be performed if they all reside on the same device. Attempting to combine a CPU tensor with a GPU tensor will result in a runtime error.

PyTorch provides a flexible and comprehensive API for creating tensors in various ways :

From existing data, like Python lists or NumPy arrays, using

torch.tensor().With specific sizes but uninitialized values using

torch.empty().With specific sizes and filled with constant values, such as

torch.zeros()ortorch.ones().With specific sizes and filled with random numbers sampled from a distribution, like

torch.rand()(uniform distribution) ortorch.randn()(normal distribution).As a sequence of numbers within a range, using

torch.arange().

Hands-On Implementation: Tensor Manipulation

The following code provides a practical, hands-on tour of tensor creation and manipulation. It is designed to be run in a Python environment like a Jupyter Notebook or Google Colab.

Set up First, import the library and check its version.

Python

import torch

print(torch.__version__)

Creating Tensors Practice creating tensors of different dimensions (or ranks) and inspect their key attributes.

Python

# Scalar (0-dimensional tensor)

scalar = torch.tensor(7)

print(f"Scalar: {scalar}")

print(f"Dimensions: {scalar.ndim}")

print(f"Shape: {scalar.shape}")

print(f"Python value: {scalar.item()}") #.item() extracts the value from a single-element tensor

# Vector (1-dimensional tensor)

vector = torch.tensor()

print(f"\nVector: {vector}")

print(f"Dimensions: {vector.ndim}")

print(f"Shape: {vector.shape}")

# Matrix (2-dimensional tensor)

MATRIX = torch.tensor([, ])

print(f"\nMatrix:\n{MATRIX}")

print(f"Dimensions: {MATRIX.ndim}")

print(f"Shape: {MATRIX.shape}")

# TENSOR (3-dimensional tensor)

TENSOR = torch.tensor([[, ]])

print(f"\nTensor:\n{TENSOR}")

print(f"Dimensions: {TENSOR.ndim}")

print(f"Shape: {TENSOR.shape}")

# Creating random tensors

random_tensor = torch.rand(size=(3, 4))

print(f"\nRandom Tensor:\n{random_tensor}")

print(f"Data type: {random_tensor.dtype}")

Tensor Operations: Mastering tensor operations is crucial for building neural networks.

Python

# Element-wise multiplication

tensor = torch.tensor()

print(f"\nElement-wise product: {tensor * tensor}")

# Matrix multiplication

mat_mul_result = torch.matmul(MATRIX, MATRIX)

print(f"\nMatrix multiplication:\n{mat_mul_result}")

# The '@' operator is a convenient shorthand for matrix multiplication

print(f"\nMatrix multiplication with '@':\n{MATRIX @ MATRIX}")

Tensor Reshaping, Stacking, Squeezing, and Unsqueezing. Manipulating tensor dimensions is a daily task for a deep learning engineer.

Python

x = torch.arange(1., 10.)

print(f"\nOriginal tensor: {x}, shape: {x.shape}")

# Reshape

x_reshaped = x.reshape(1, 9)

print(f"Reshaped tensor: {x_reshaped}, shape: {x_reshaped.shape}")

# Squeeze - removes all dimensions of size 1

x_squeezed = x_reshaped.squeeze()

print(f"Squeezed tensor: {x_squeezed}, shape: {x_squeezed.shape}")

# Unsqueeze - adds a dimension of size 1 at a specific position

x_unsqueezed = x_squeezed.unsqueeze(dim=0)

print(f"Unsquuezed tensor: {x_unsqueezed}, shape: {x_unsqueezed.shape}")

CPU/GPU Interaction: Efficiently managing data across devices is key to performance.

Python

# Check for GPU availability

device = "cuda" if torch.cuda.is_available() else "cpu"

print(f"\nUsing device: {device}")

# Create a tensor on the CPU

tensor_cpu = torch.tensor(, device="cpu")

print(f"Tensor on CPU: {tensor_cpu}, Device: {tensor_cpu.device}")

# Move the tensor to the GPU (if available)

if device == "cuda":

tensor_gpu = tensor_cpu.to(device)

print(f"Tensor on GPU: {tensor_gpu}, Device: {tensor_gpu.device}")

# Trying to operate on tensors on different devices will cause an error

try:

tensor_cpu + tensor_gpu

except RuntimeError as e:

print(f"\nError caught as expected: {e}")

NumPy Bridge The seamless, zero-copy conversion between PyTorch CPU tensors and NumPy arrays is a powerful feature for integrating with the broader Python data science ecosystem.

Python

import numpy as np

# Convert NumPy array to PyTorch tensor

numpy_array = np.array([1.0, 2.0, 3.0])

tensor_from_numpy = torch.from_numpy(numpy_array)

print(f"\nTensor from NumPy: {tensor_from_numpy}, dtype: {tensor_from_numpy.dtype}")

# Convert PyTorch tensor to NumPy array

tensor = torch.ones(3)

numpy_from_tensor = tensor.numpy()

print(f"NumPy from Tensor: {numpy_from_tensor}, type: {type(numpy_from_tensor)}")

# The memory is shared: changing the numpy array will change the tensor

numpy_array = 100

print(f"Original NumPy array changed: {numpy_array}")

print(f"Tensor from NumPy also changed: {tensor_from_numpy}")

Day 2: The Engine of Learning: Autograd and Backpropagation

Objective: To demystify torch.autograd, PyTorch's automatic differentiation engine. This section will explain how it constructs computational graphs and automatically computes the gradients necessary for training neural networks, forming the mathematical backbone of the learning process.

Theoretical Knowledge: How PyTorch Learns

The magic of how a neural network learns from data is powered by two interconnected concepts: backpropagation and automatic differentiation. PyTorch's autograd engine is a masterful implementation of the latter.

At its core, autograd works by building a Directed Acyclic Graph (DAG), often called a computational graph. This graph is created dynamically, or "on the fly," during the forward pass of the network. In this DAG:

Nodes are the

torch.Tensorobjects.Edges are the mathematical operations (functions) that take input tensors and produce output tensors.

When a tensor is created with the attribute requires_grad=True, PyTorch begins to track its history. Every operation involving this tensor creates a new node in the graph, and the output tensor stores a reference to the function that created it via its grad_fn attribute. This chain of grad_fn references forms the backward graph, which is essential for differentiation.

The process of learning is initiated by calling the .backward() method, typically on a scalar tensor that represents the model's error, or loss. This single command triggers the backpropagation algorithm.

autograd traverses the computational graph backward from the loss node, applying the chain rule of calculus at each step. It calculates the gradient of the loss with respect to every intermediate tensor and ultimately with respect to the model's parameters (the "leaf" tensors in the graph that were created with requires_grad=True).

A crucial and often misunderstood behavior of autograd is gradient accumulation. When .backward() is called, the newly computed gradients for each parameter are added to the value already stored in that parameter's .grad attribute. They are not overwritten. This design is useful for advanced scenarios like training with gradient accumulation to simulate larger batch sizes. However, for a standard training loop, it means that gradients from the previous iteration will interfere with the current one if they are not explicitly cleared. This is why it is an absolute necessity to call

optimizer.zero_grad() before each training step.

Finally, while gradient tracking is essential for training, it is unnecessary and computationally wasteful during inference or evaluation. PyTorch provides context managers like torch.no_grad() or the more modern and preferred torch.inference_mode() to disable gradient calculation within a block of code. This significantly speeds up computations and reduces memory usage, as PyTorch does not need to build the backward graph.

The dynamic nature of the computational graph is arguably PyTorch's most defining feature. Because the graph is defined by the Python code as it runs (a concept known as eager execution ), developers can use standard Python control flow statements like

if-else conditions and for loops directly within their model's forward method. The graph structure can change with every pass, depending on the input data. This provides immense flexibility, particularly for research into novel architectures with dynamic components. Furthermore, it makes debugging incredibly straightforward. An error occurs exactly where the Python code indicates, allowing developers to use standard Python debuggers to inspect tensor values, shapes, and gradients at any point in the execution, a significant advantage over static graph frameworks where the graph is defined first, compiled, and then executed as a black box.

Hands-On Implementation: Gradients in Action

The following examples provide a practical demonstration of autograd's functionality.

Simple Derivative Example This demonstrates the most basic use of autograd to compute the derivative of y=x2, which is 2x. At x=3, the derivative should be 6.

Python

import torch

# Create a tensor with gradient tracking enabled

x = torch.tensor(3.0, requires_grad=True)

print(f"x: {x}")

# Perform an operation

y = x ** 2

print(f"y = x**2: {y}")

print(f"y's grad_fn: {y.grad_fn}") # Shows the function that created y

# Calculate gradients via backpropagation

y.backward()

# Inspect the gradient of x

print(f"Gradient of y with respect to x (dy/dx): {x.grad}") # Should be 6.0

Multi-variable Example This example from the official documentation computes gradients for a function with multiple variables, Q=3a3−b2.

Python

a = torch.tensor([2., 3.], requires_grad=True)

b = torch.tensor([6., 4.], requires_grad=True)

Q = 3*a**3 - b**2

# Since Q is a vector, we need to provide a gradient argument to backward()

# to specify the gradient of the final function w.r.t Q. For simplicity, we use a vector of ones.

external_grad = torch.tensor([1., 1.])

Q.backward(gradient=external_grad)

# Check if the computed gradients are correct

# dQ/da = 9a^2

print(f"Gradient of Q w.r.t a: {a.grad}")

print(f"Expected gradient for a: {9*a**2}")

# dQ/db = -2b

print(f"Gradient of Q w.r.t b: {b.grad}")

print(f"Expected gradient for b: {-2*b}")

Gradient Accumulation Demonstration This code block explicitly shows how gradients accumulate if not zeroed out.

Python

x_accum = torch.tensor(2.0, requires_grad=True)

y_accum = x_accum ** 2

# First backward pass

y_accum.backward()

print(f"x.grad after first backward pass: {x_accum.grad}") # Expected: 4.0

# Second backward pass on the same graph

# Note: In most real cases, you'd need retain_graph=True, but here it's simple enough.

y_accum = x_accum ** 2 # Re-define to create a new graph path for demonstration

y_accum.backward()

print(f"x.grad after second backward pass (without zeroing): {x_accum.grad}") # Expected: 8.0 (4.0 + 4.0)

# The correct way: zero the gradients

if x_accum.grad is not None:

x_accum.grad.zero_()

y_accum = x_accum ** 2

y_accum.backward()

print(f"x.grad after zeroing and new backward pass: {x_accum.grad}") # Expected: 4.0

Manual Linear Regression with Autograd This exercise solidifies the entire process by manually implementing a training loop before introducing higher-level abstractions.

Python

# 1. Create data

X = torch.tensor([[1.0], [2.0], [3.0], [4.0]])

y = torch.tensor([[2.0], [4.0], [6.0], [8.0]]) # y = 2 * X

# 2. Initialize parameters with gradient tracking

w = torch.tensor([[0.0]], requires_grad=True)

b = torch.tensor([[0.0]], requires_grad=True)

learning_rate = 0.01

n_iters = 20

# 3. Training loop

for epoch in range(n_iters):

# Forward pass: compute predicted y

y_pred = X @ w + b

# Compute loss (Mean Squared Error)

loss = torch.mean((y_pred - y)**2)

# Backward pass: compute gradients of loss w.r.t. w and b

loss.backward()

# Update weights manually, inside a no_grad() block

with torch.no_grad():

w -= learning_rate * w.grad

b -= learning_rate * b.grad

# Manually zero the gradients for the next iteration

w.grad.zero_()

b.grad.zero_()

if (epoch + 1) % 5 == 0:

print(f'Epoch {epoch+1}: loss = {loss.item():.4f}, w = {w.item():.3f}, b = {b.item():.3f}')

print(f"\nFinal prediction after training: w = {w.item():.3f}, b = {b.item():.3f}")

Day 3: The PyTorch Workflow: Building Your First Neural Network

Objective: To synthesize the concepts from Days 1 and 2 into the standard, end-to-end PyTorch workflow. This day focuses on building, training, and evaluating a complete neural network using the essential torch.nn and torch.optim modules, which provide the high-level abstractions necessary for efficient and organized model development.

Theoretical Knowledge: The Building Blocks of a Model

While it is possible to build models using only raw tensor operations and autograd, as demonstrated on Day 2, this approach quickly becomes cumbersome for complex architectures. PyTorch provides the torch.nn package to streamline this process.

torch.nn.Module: The Model Container torch.nn.Module is the base class from which all neural network models should inherit. It serves as a container for the network's components and defines its structure. A custom model class typically implements two essential methods:

__init__(): The constructor where the model's layers are defined and initialized. These layers, such asnn.Linearornn.Conv2d, are themselves instances ofnn.Module, allowing for a nested, tree-like structure. Anynn.Moduleassigned as an attribute in the constructor is automatically registered.forward(): This method defines the forward pass of the network. It takes the input data and dictates how it flows through the defined layers to produce an output. Thebackward()pass is handled automatically byautograd; it does not need to be defined here.

torch.nn.Parameter: Trainable Tensors torch.nn.Parameter is a special wrapper class for a torch.Tensor. When a Parameter is assigned as an attribute of an nn.Module, it is automatically added to the list of the module's parameters. This is how the model.parameters() method is able to find all the trainable weights and biases of a model, which are then passed to the optimizer for updates. Layers like

nn.Linear internally define their weights and biases as nn.Parameter.

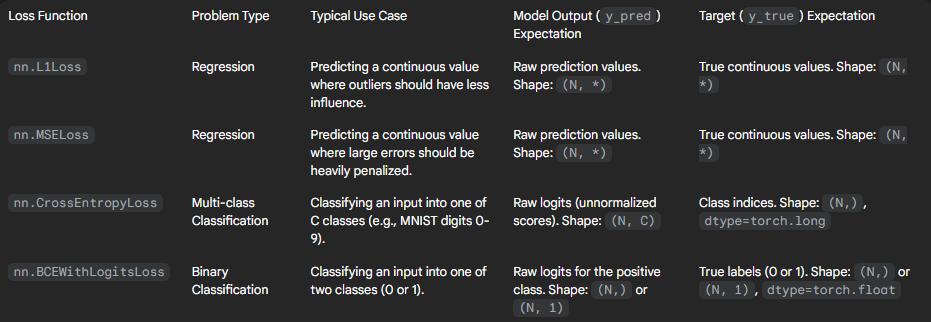

Loss Functions (torch.nn) The torch.nn module provides a suite of common, pre-built loss functions that measure the discrepancy between the model's predictions and the ground truth labels. Choosing the correct loss function is critical for successful training. Common choices include :

nn.L1Loss(Mean Absolute Error): Often used for regression tasks.nn.MSELoss(Mean Squared Error): The most common loss for regression tasks.nn.CrossEntropyLoss: The standard choice for multi-class classification. It conveniently combinesnn.LogSoftmaxandnn.NLLLoss, so it expects raw, unnormalized scores (logits) from the model.nn.BCEWithLogitsLoss: Used for binary classification or multi-label classification. It also expects raw logits and is more numerically stable than using aSigmoidlayer followed bynn.BCELoss.

Optimizers (torch.optim) The torch.optim package provides implementations of various optimization algorithms that define how the model's parameters are updated based on the computed gradients. The optimizer encapsulates the update logic (e.g., stochastic gradient descent). To construct an optimizer, one must provide it with the model's parameters to be updated (retrieved via model.parameters()) and algorithm-specific hyperparameters, such as the learning rate (lr). The most basic and foundational optimizer is

torch.optim.SGD.

Hands-On Implementation: The Full Training Loop

This section refactors the manual linear regression model from Day 2 into the standard, modular PyTorch workflow. This pattern is the foundation for nearly all deep learning projects in PyTorch.

Step 1: Data Preparation First, prepare the data and split it into training and test sets. For reproducibility, a random seed is set.

Python

import torch

from torch import nn

# Create known parameters

weight = 0.7

bias = 0.3

# Create data

start = 0

end = 1

step = 0.02

X = torch.arange(start, end, step).unsqueeze(dim=1)

y = weight * X + bias

# Split data into 80% training, 20% testing

train_split = int(0.8 * len(X))

X_train, y_train = X[:train_split], y[:train_split]

X_test, y_test = X[train_split:], y[train_split:]

print(f"Training samples: {len(X_train)}, Test samples: {len(X_test)}")

Step 2: Build the Model Define the model by subclassing nn.Module and using the nn.Linear layer.

Python

class LinearRegressionModelV2(nn.Module):

def __init__(self):

super().__init__()

# Use the built-in nn.Linear layer for weights and bias

self.linear_layer = nn.Linear(in_features=1, out_features=1)

def forward(self, x: torch.Tensor) -> torch.Tensor:

return self.linear_layer(x)

# Set the manual seed for reproducibility of initial weights

torch.manual_seed(42)

model_v2 = LinearRegressionModelV2()

# Inspect the randomly initialized parameters

print("\nInitial model parameters:")

print(model_v2.state_dict())

Step 3: Define Loss and Optimizer Set up the loss function and the optimizer, passing the model's parameters to the optimizer.

Python

loss_fn = nn.L1Loss() # MAE Loss

optimizer = torch.optim.SGD(params=model_v2.parameters(), lr=0.01)

Step 4: The Training & Evaluation Loop This is the canonical training loop in PyTorch. Each step is essential for correct and efficient training. A critical and often overlooked detail is the use of model.train() and model.eval(). These methods set the module in training or evaluation mode, respectively. While not impactful for a simple linear layer, they are crucial for layers like nn.Dropout and nn.BatchNorm2d, which have different behaviors during training and inference. Forgetting to toggle these modes is a common source of bugs that leads to non-reproducible or suboptimal results, as dropout might remain active during evaluation, or batch normalization might use incorrect statistics. Managing the model's state explicitly is a hallmark of a professional workflow.

Python

torch.manual_seed(42)

epochs = 200

# Set up device-agnostic code

device = "cuda" if torch.cuda.is_available() else "cpu"

model_v2.to(device)

X_train, y_train = X_train.to(device), y_train.to(device)

X_test, y_test = X_test.to(device), y_test.to(device)

for epoch in range(epochs):

### Training Phase

model_v2.train() # Set the model to training mode

# 1. Forward pass

y_preds = model_v2(X_train)

# 2. Calculate the loss

loss = loss_fn(y_preds, y_train)

# 3. Zero the optimizer's gradients

optimizer.zero_grad()

# 4. Perform backpropagation to calculate gradients

loss.backward()

# 5. Step the optimizer to update parameters

optimizer.step()

### Evaluation Phase

model_v2.eval() # Set the model to evaluation mode

with torch.inference_mode(): # Use inference_mode for efficiency

test_preds = model_v2(X_test)

test_loss = loss_fn(test_preds, y_test)

if epoch % 20 == 0:

print(f"Epoch: {epoch} | Train Loss: {loss:.4f} | Test Loss: {test_loss:.4f}")

# Inspect the final learned parameters

print("\nFinal model parameters:")

print(model_v2.state_dict())

Step 5: Making Predictions & Saving the Model After training, the model can be used for inference and its state can be saved for later use.

Python

# Make predictions

model_v2.eval()

with torch.inference_mode():

final_preds = model_v2(X_test)

# Save the model state dictionary

MODEL_PATH = "linear_model_v2.pth"

torch.save(obj=model_v2.state_dict(), f=MODEL_PATH)

print(f"\nModel state saved to {MODEL_PATH}")

# Load the saved state dictionary into a new model instance

loaded_model = LinearRegressionModelV2()

loaded_model.load_state_dict(torch.load(f=MODEL_PATH))

loaded_model.to(device)

print("Model loaded successfully.")

Table: Common PyTorch Loss Functions

A frequent point of confusion for developers is selecting the appropriate loss function and ensuring the model's output and target labels are in the correct format. The following table serves as a quick-reference guide to prevent common errors and accelerate project setup.

Part II: Architectures and Applications (Days 4-10)

With the foundational workflow established, this week is dedicated to implementation. The focus shifts to building the most important deep learning architectures from scratch, applying them to real-world problems in computer vision and natural language processing. Each day builds on the last, adding complexity and introducing new, powerful concepts that form the modern deep learning toolkit.

Day 4: Mastering Data with Dataset and DataLoader

Objective: To master PyTorch's data loading utilities, torch.utils.data.Dataset and torch.utils.data.DataLoader, to create efficient, scalable, and reusable data input pipelines.

Theoretical Knowledge: Decoupling Data Logic

A robust and efficient data pipeline is the lifeblood of any successful machine learning project. PyTorch provides a powerful and elegant abstraction for this through its Dataset and DataLoader classes. This design is a prime example of the "Separation of Concerns" software engineering principle applied to machine learning. The Dataset is concerned with data representation and access, while the DataLoader is concerned with data sampling and iteration strategy. This decoupling is the key to building scalable and maintainable systems.

The Dataset Class The torch.utils.data.Dataset is an abstract class that represents a dataset. To create a custom dataset, one simply needs to subclass it and override two key methods :

__len__(): This method should return the total number of samples in the dataset. TheDataLoaderuses this to know the size of the dataset.__getitem__(self, idx): This method is responsible for loading and returning a single sample from the dataset given an indexidx. All the logic for reading a file from disk, accessing a database, or applying transformations to the data resides here. This design supports lazy loading, where data is only loaded into memory when it is requested, which is essential for handling datasets that are too large to fit into RAM.

The DataLoader Class The torch.utils.data.DataLoader wraps a Dataset and provides an iterable over it. It automates the complex and often boilerplate logic of data handling for training :

Batching (

batch_size): It groups individual samples from theDatasetinto mini-batches, which is necessary for stable and efficient training with stochastic gradient descent.Shuffling (

shuffle=True): At the beginning of each epoch, it randomly shuffles the indices of the data before creating batches. This is crucial to prevent the model from learning the order of the data and to ensure that the gradients are representative of the overall dataset.Parallel Loading (

num_workers): This is a critical performance feature. By settingnum_workersto a value greater than 0, theDataLoaderspawns multiple subprocesses to load data in parallel. This ensures that data preprocessing on the CPU can keep up with the model's computations on the GPU, preventing the GPU from sitting idle while waiting for data.Pinned Memory (

pin_memory=True): When training on a GPU, setting this toTruetells theDataLoaderto copy tensors into a special "pinned" memory region on the CPU. This can significantly speed up the data transfer from CPU to GPU memory.

Transforms (torchvision.transforms) Data augmentation and normalization are critical steps in any computer vision pipeline. These operations are typically defined using torchvision.transforms and applied within the Dataset's __getitem__ method. This ensures that transformations, especially random ones for augmentation, are applied on-the-fly to each sample as it is loaded.

Hands-On Implementation: A Custom Image Dataset

This example demonstrates how to create a custom Dataset for a typical image classification task where images are organized in folders by class.

Step 1: Setup First, download and organize an image dataset. A common structure is a root directory with train and test subdirectories, each containing folders named after the classes (e.g., train/cat/, train/dog/).

Step 2: Create a Custom Dataset The following class will read images from a directory structure as described above. It demonstrates lazy loading, as images are only opened from disk when __getitem__ is called.

Python

import os

from PIL import Image

from torch.utils.data import Dataset

from torchvision import transforms

class CustomImageDataset(Dataset):

def __init__(self, root_dir, transform=None):

"""

Args:

root_dir (string): Directory with all the images, structured as root_dir/class_name/image.jpg

transform (callable, optional): Optional transform to be applied on a sample.

"""

self.root_dir = root_dir

self.transform = transform

self.classes = sorted(os.listdir(root_dir))

self.class_to_idx = {cls_name: i for i, cls_name in enumerate(self.classes)}

self.samples = self._make_dataset()

def _make_dataset(self):

images =

for class_name in self.classes:

class_dir = os.path.join(self.root_dir, class_name)

if not os.path.isdir(class_dir):

continue

for img_name in sorted(os.listdir(class_dir)):

path = os.path.join(class_dir, img_name)

item = (path, self.class_to_idx[class_name])

images.append(item)

return images

def __len__(self):

return len(self.samples)

def __getitem__(self, idx):

img_path, label = self.samples[idx]

try:

image = Image.open(img_path).convert("RGB")

except (IOError, OSError):

print(f"Warning: Could not load image {img_path}. Skipping.")

# Return a placeholder or handle appropriately

return self.__getitem__((idx + 1) % len(self))

if self.transform:

image = self.transform(image)

return image, label

Step 3: Define Transformations Define a pipeline of transformations for data augmentation and normalization. For models pre-trained on ImageNet, it is standard practice to use its normalization statistics.

Python

data_transform = transforms.Compose(, # Normalize with ImageNet stats

std=[0.229, 0.224, 0.225])

])

Step 4: Instantiate Dataset and DataLoader Create instances of the custom dataset and wrap them with DataLoader.

Python

from torch.utils.data import DataLoader

# Assume 'data/train' is the path to the training images

train_dataset = CustomImageDataset(root_dir='data/train', transform=data_transform)

train_dataloader = DataLoader(train_dataset,

batch_size=32,

shuffle=True,

num_workers=4, # Use 4 CPU cores for parallel loading

pin_memory=True) # Speeds up CPU to GPU transfer

Step 5: Iterate and Visualize Iterate through one batch to verify that the pipeline is working correctly.

Python

# Fetch one batch of data

images, labels = next(iter(train_dataloader))

print(f"Images batch shape: {images.shape}") # Should be

print(f"Labels batch shape: {labels.shape}") # Should be

This confirms that the DataLoader is yielding batches of the correct shape and type, ready to be fed into a neural network.

Day 5: Convolutional Neural Networks (CNNs) for Computer Vision - Part 1

Objective: To understand the theory behind Convolutional Neural Networks (CNNs) and build a simple CNN from scratch in PyTorch to solve a classic image classification problem.

Theoretical Knowledge: The Power of Convolutions

For tasks involving grid-like data such as images, standard fully connected networks (or Multi-Layer Perceptrons) have two major weaknesses. First, they require flattening the 2D or 3D image into a 1D vector, which destroys the crucial spatial structure of the pixels. Pixels that are close together in the image are highly correlated, and this information is lost. Second, they result in an explosion of parameters. A simple 224x224 color image would require over 150,000 input neurons, leading to millions of weights in just the first hidden layer, making the model prone to overfitting and computationally expensive.

Convolutional Neural Networks (CNNs) were designed specifically to overcome these issues by leveraging two key ideas: sparse connectivity and parameter sharing. Instead of connecting every input neuron to every hidden neuron, a CNN uses small filters (kernels) that connect to only a small, local region of the input image at a time. This filter then slides, or convolves, across the entire image, sharing the same set of weights at every location. This process allows the network to learn features like edges or textures in a translation-invariant manner—it doesn't matter where in the image the feature appears.

The core components of a modern CNN architecture are :

Convolutional Layer (

nn.Conv2d): This is the workhorse of the CNN. It learns feature detectors (filters). Its key parameters are:in_channels: The number of channels in the input image (e.g., 1 for grayscale, 3 for RGB).out_channels: The number of filters to apply, which determines the depth of the output feature map.kernel_size: The dimensions of the filter (e.g., 3 for a 3x3 filter).stride: The number of pixels the filter moves at each step.padding: Zero-padding added to the borders of the input to control the output size.

Activation Function (

nn.ReLU): After each convolution, a non-linear activation function is applied. The Rectified Linear Unit (ReLU), which simply outputsmax(0, x), is the standard choice because it is computationally efficient and helps mitigate the vanishing gradient problem.Pooling Layer (

nn.MaxPool2d): This layer performs down-sampling. It reduces the spatial dimensions (height and width) of the feature maps, which reduces the number of parameters and computation in the network. It also helps to make the learned features more robust to small translations and distortions in the input image.Fully Connected Layer (

nn.Linear): After several convolutional and pooling layers have extracted hierarchical features from the image, the final feature maps are flattened into a 1D vector and fed into one or more fully connected layers, which perform the final classification.

Hands-On Implementation: CNN for MNIST

The MNIST handwritten digit dataset is the canonical "Hello, World!" for computer vision. We will build a simple CNN to classify its images.

Step 1: Data Loading PyTorch's torchvision provides easy access to the MNIST dataset. We will create DataLoaders for training and testing, applying transformations to convert images to tensors and normalize their pixel values.

Python

import torch

from torch import nn

from torch.utils.data import DataLoader

from torchvision import datasets

from torchvision.transforms import ToTensor, Normalize, Compose

# Define transformations

transform = Compose()

# Download and load the training data

train_data = datasets.MNIST(

root="data",

train=True,

download=True,

transform=transform,

)

# Download and load the test data

test_data = datasets.MNIST(

root="data",

train=False,

download=True,

transform=transform,

)

# Create DataLoaders

BATCH_SIZE = 64

train_dataloader = DataLoader(train_data, batch_size=BATCH_SIZE, shuffle=True)

test_dataloader = DataLoader(test_data, batch_size=BATCH_SIZE, shuffle=False)

Step 2: Build the CNN Model The transition from the final convolutional block to the first linear classifier via nn.Flatten() is a common point of failure for beginners due to shape mismatches. Calculating the correct in_features for the nn.Linear layer is a critical skill. The number of input features is the product of the channels, height, and width of the tensor exiting the final convolutional/pooling layer. While this can be calculated manually, a robust programmatic trick is to perform a "dummy forward pass" with a sample input through the convolutional part of the model and inspect the output shape. This leverages PyTorch's eager execution to get a definitive answer, making model construction less error-prone.

Python

class MNIST_CNN(nn.Module):

def __init__(self):

super().__init__()

# First convolutional block

self.conv_block_1 = nn.Sequential(

nn.Conv2d(in_channels=1, out_channels=16, kernel_size=3, stride=1, padding=1),

nn.ReLU(),

nn.MaxPool2d(kernel_size=2, stride=2) # Output: 16 x 14 x 14

)

# Second convolutional block

self.conv_block_2 = nn.Sequential(

nn.Conv2d(in_channels=16, out_channels=32, kernel_size=3, stride=1, padding=1),

nn.ReLU(),

nn.MaxPool2d(kernel_size=2, stride=2) # Output: 32 x 7 x 7

)

# Classifier

self.classifier = nn.Sequential(

nn.Flatten(), # Flattens the 32x7x7 tensor to a vector

nn.Linear(in_features=32 * 7 * 7, out_features=10) # 10 classes for digits 0-9

)

def forward(self, x: torch.Tensor):

x = self.conv_block_1(x)

x = self.conv_block_2(x)

x = self.classifier(x)

return x

# Instantiate the model

model_cnn = MNIST_CNN()

print(model_cnn)

Step 3: Training and Evaluation We use the standard training loop developed on Day 3, now applied to our CNN and the MNIST DataLoaders. We will use nn.CrossEntropyLoss as it's a multi-class classification problem, and the Adam optimizer, which is a popular and effective choice.

Python

# Setup device, loss function, and optimizer

device = "cuda" if torch.cuda.is_available() else "cpu"

model_cnn.to(device)

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(params=model_cnn.parameters(), lr=0.001)

# Training loop function

def train_step(model, data_loader, loss_fn, optimizer, device):

model.train()

train_loss, train_acc = 0, 0

for batch, (X, y) in enumerate(data_loader):

X, y = X.to(device), y.to(device)

y_pred = model(X)

loss = loss_fn(y_pred, y)

train_loss += loss.item()

optimizer.zero_grad()

loss.backward()

optimizer.step()

y_pred_class = torch.argmax(torch.softmax(y_pred, dim=1), dim=1)

train_acc += (y_pred_class == y).sum().item()/len(y_pred)

return train_loss / len(data_loader), train_acc / len(data_loader)

# Evaluation loop function

def test_step(model, data_loader, loss_fn, device):

model.eval()

test_loss, test_acc = 0, 0

with torch.inference_mode():

for X, y in data_loader:

X, y = X.to(device), y.to(device)

test_pred = model(X)

loss = loss_fn(test_pred, y)

test_loss += loss.item()

test_pred_labels = test_pred.argmax(dim=1)

test_acc += ((test_pred_labels == y).sum().item()/len(test_pred_labels))

return test_loss / len(data_loader), test_acc / len(data_loader)

# Main training process

epochs = 5

for epoch in range(epochs):

train_loss, train_acc = train_step(model_cnn, train_dataloader, loss_fn, optimizer, device)

test_loss, test_acc = test_step(model_cnn, test_dataloader, loss_fn, device)

print(f"Epoch: {epoch+1} | Train loss: {train_loss:.4f}, Train acc: {train_acc:.4f} | Test loss: {test_loss:.4f}, Test acc: {test_acc:.4f}")

Step 4: Visualize Results After training, a qualitative evaluation involves visualizing the model's predictions on test images. This provides a tangible sense of its performance beyond just accuracy metrics. A function can be created to take a batch of test images, get the model's predictions, and plot the images with their predicted and true labels for comparison.

Day 6: CNNs for Computer Vision - Part 2: Advanced Techniques

Objective: To build upon the simple CNN by tackling a more challenging dataset (CIFAR-10) and introducing essential regularization techniques (Dropout, BatchNorm2d) and architectural improvements to build a more powerful and generalizable model.

Theoretical Knowledge: Fighting Overfitting and Stabilizing Training

As models become deeper and more complex, they gain the capacity to memorize the training data instead of learning generalizable patterns. This phenomenon is known as overfitting. It is typically identified when the model's performance on the training set continues to improve, while its performance on a held-out validation or test set stagnates or degrades. Plotting the training and validation loss curves over epochs is a standard way to diagnose this; a widening gap between the two curves is a clear sign of overfitting. To combat this and improve model robustness, several techniques are employed.

Dropout (nn.Dropout) Dropout is a simple yet remarkably effective regularization technique. During each forward pass in the training phase, it randomly sets a fraction of neuron activations to zero with a specified probability p. This prevents neurons from becoming overly reliant on the presence of specific other neurons, forcing the network to learn more distributed and robust feature representations. At evaluation time (model.eval() mode), dropout is deactivated, and all activations are used (scaled appropriately to account for the dropped units during training) to make predictions.

Batch Normalization (nn.BatchNorm2d) Batch Normalization is a technique designed to stabilize and accelerate the training process. It normalizes the activations of a layer for each mini-batch by subtracting the batch mean and dividing by the batch standard deviation. It then learns two additional parameters, a scale (γ) and a shift (β), to allow the network to learn the optimal distribution for the activations. This process addresses the problem of "internal covariate shift," where the distribution of each layer's inputs changes during training as the parameters of the previous layers change. By maintaining a more stable distribution of activations, batch norm allows for higher learning rates, acts as a form of regularization, and makes the network less sensitive to weight initialization.

The order of operations for these layers is a subject of empirical study, but a common and highly effective pattern is Conv -> BatchNorm -> ReLU. Applying BatchNorm before the ReLU activation normalizes the raw outputs of the convolution, ensuring that the inputs to the activation function are in a stable, well-behaved range. This prevents the ReLU from receiving values that are too large or small, which could hinder learning. Dropout is then typically applied after the activation.

Hands-On Implementation: A Deeper CNN for CIFAR-10

The CIFAR-10 dataset consists of 32x32 color images across 10 classes, presenting a greater challenge than MNIST due to the increased complexity and variation in the images.

Step 1: Data Loading We use torchvision.datasets.CIFAR10. The key differences from MNIST are that the input images have 3 channels (RGB) and require different normalization statistics.

Python

import torch

from torch import nn

from torch.utils.data import DataLoader

from torchvision import datasets, transforms

# CIFAR-10 images are 3-channel, and we use standard normalization stats

transform = transforms.Compose()

train_data = datasets.CIFAR10(root="data", train=True, download=True, transform=transform)

test_data = datasets.CIFAR10(root="data", train=False, download=True, transform=transform)

# Create a validation split from the training data

train_size = int(0.9 * len(train_data))

val_size = len(train_data) - train_size

train_dataset, val_dataset = torch.utils.data.random_split(train_data, [train_size, val_size])

# Create DataLoaders

BATCH_SIZE = 64

train_loader = DataLoader(train_dataset, batch_size=BATCH_SIZE, shuffle=True)

val_loader = DataLoader(val_dataset, batch_size=BATCH_SIZE, shuffle=False)

test_loader = DataLoader(test_data, batch_size=BATCH_SIZE, shuffle=False)

Step 2: Build an Improved CNN This deeper architecture incorporates BatchNorm2d and Dropout layers to improve performance and generalization.

Python

class CIFAR10_CNN(nn.Module):

def __init__(self):

super().__init__()

self.network = nn.Sequential(

# Input: 3 x 32 x 32

nn.Conv2d(3, 32, kernel_size=3, padding=1),

nn.BatchNorm2d(32),

nn.ReLU(),

nn.Conv2d(32, 64, kernel_size=3, stride=1, padding=1),

nn.BatchNorm2d(64),

nn.ReLU(),

nn.MaxPool2d(2, 2), # Output: 64 x 16 x 16

nn.Dropout(0.25),

nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1),

nn.BatchNorm2d(128),

nn.ReLU(),

nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1),

nn.BatchNorm2d(128),

nn.ReLU(),

nn.MaxPool2d(2, 2), # Output: 128 x 8 x 8

nn.Dropout(0.25),

nn.Flatten(),

nn.Linear(128 * 8 * 8, 512),

nn.ReLU(),

nn.Dropout(0.5),

nn.Linear(512, 10)

)

def forward(self, x: torch.Tensor):

return self.network(x)

model_cifar = CIFAR10_CNN()

Step 3: Training with Validation The training loop is modified to include an evaluation step on the validation set after each epoch. This allows for monitoring of overfitting.

Python

# Setup device, model, loss, and optimizer

device = "cuda" if torch.cuda.is_available() else "cpu"

model_cifar.to(device)

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model_cifar.parameters(), lr=0.001)

# Lists to store loss history for plotting

train_loss_history =

val_loss_history =

epochs = 15

for epoch in range(epochs):

# Training step (using function from Day 5, adapted for this model)

train_loss, train_acc = train_step(model_cifar, train_loader, loss_fn, optimizer, device)

# Validation step

val_loss, val_acc = test_step(model_cifar, val_loader, loss_fn, device) # Reusing test_step for validation

train_loss_history.append(train_loss)

val_loss_history.append(val_loss)

print(f"Epoch: {epoch+1} | Train Loss: {train_loss:.4f}, Train Acc: {train_acc:.2f}% | Val Loss: {val_loss:.4f}, Val Acc: {val_acc:.2f}%")

Step 4: Plotting Loss Curves Visualizing the training and validation loss is essential for understanding the training dynamics.

Python

import matplotlib.pyplot as plt

plt.figure(figsize=(10, 5))

plt.title("Training and Validation Loss")

plt.plot(train_loss_history, label="train")

plt.plot(val_loss_history, label="val")

plt.xlabel("Epochs")

plt.ylabel("Loss")

plt.legend()

plt.show()

A healthy plot will show both curves decreasing and converging. If the training loss continues to decrease while the validation loss flattens or increases, the model is overfitting.

Day 7: Transfer Learning: Standing on the Shoulders of Giants

Objective: To master transfer learning, one of the most impactful techniques in modern deep learning. This section covers how to leverage pre-trained models from torchvision.models to achieve high performance on new tasks with significantly less data and computation.

Theoretical Knowledge: The Two Strategies of Transfer Learning

Training a deep CNN from scratch requires a massive amount of labeled data and significant computational resources. However, models trained on large, general datasets like ImageNet (with over a million images across 1000 classes) learn a rich hierarchy of visual features—from simple edges and textures in the early layers to complex object parts in deeper layers. Transfer learning is the practice of reusing these learned features for a new task. This approach can lead to better performance, faster convergence, and a drastic reduction in the amount of data needed for the target task.

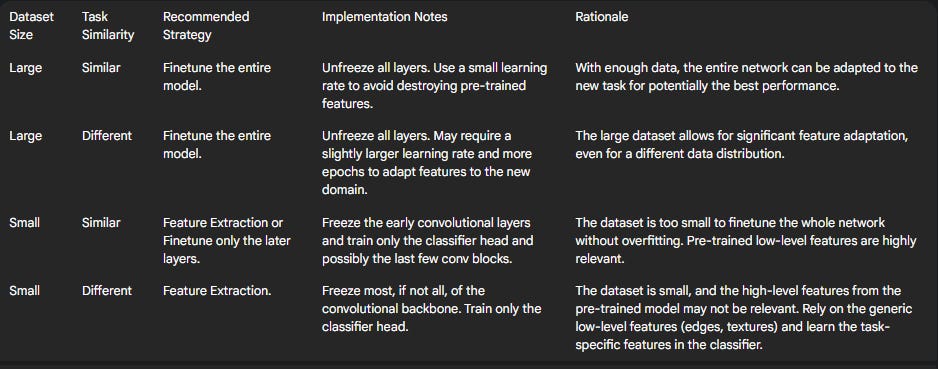

There are two primary strategies for transfer learning :

Finetuning: This strategy involves loading a pre-trained model and unfreezing all of its parameters. The final classification layer (the "head") is replaced with a new one tailored to the number of classes in the new task. The entire network is then trained on the new dataset, typically with a small learning rate. This allows the pre-trained features to be "fine-tuned" or adapted to the specifics of the new data. This is the most common approach when the new dataset is reasonably large and similar to the original dataset.

Feature Extraction: In this strategy, the pre-trained model is used as a fixed feature extractor. The weights of the convolutional base (the "backbone") are frozen, meaning they will not be updated during training. Only the parameters of the newly added classification head are trained. This approach is ideal when the new dataset is very small, as training the entire network would likely lead to severe overfitting.

The choice between these strategies depends on the size of the new dataset and its similarity to the original dataset (e.g., ImageNet). An engineer must make a strategic decision to adapt the pre-trained model effectively, moving beyond simply using it as a black box.

Table: Transfer Learning Strategy Guide

The following table provides a decision framework for choosing the appropriate transfer learning strategy based on dataset size and task similarity.

Hands-On Implementation: Classifying Ants vs. Bees

We will use the hymenoptera_data dataset, a small dataset of ant and bee images, which is perfectly suited for demonstrating the power of transfer learning.

Step 1: Data Preparation Load the data using ImageFolder. The transformations are critical: images must be resized to 224x224 and normalized with the ImageNet statistics, as this is what the pre-trained ResNet model expects.

Python

import torch

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import DataLoader

from torchvision import datasets, models, transforms

device = "cuda" if torch.cuda.is_available() else "cpu"

data_transforms = {

'train': transforms.Compose(, [0.229, 0.224, 0.225])

]),

'val': transforms.Compose(, [0.229, 0.224, 0.225])

]),

}

data_dir = 'data/hymenoptera_data'

image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x]) for x in ['train', 'val']}

dataloaders = {x: DataLoader(image_datasets[x], batch_size=4, shuffle=True, num_workers=4) for x in ['train', 'val']}

dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}

class_names = image_datasets['train'].classes

Step 2: Implementing Feature Extraction Here, we freeze the backbone and only train the new classifier head.

Python

# Load pre-trained ResNet18

model_extractor = models.resnet18(weights='IMAGENET1K_V1')

# Freeze all parameters in the model

for param in model_extractor.parameters():

param.requires_grad = False

# Replace the final fully connected layer

num_ftrs = model_extractor.fc.in_features

model_extractor.fc = nn.Linear(num_ftrs, len(class_names)) # len(class_names) is 2

model_extractor = model_extractor.to(device)

# Define loss and optimizer (only for the classifier parameters)

criterion = nn.CrossEntropyLoss()

optimizer_extractor = optim.SGD(model_extractor.fc.parameters(), lr=0.001, momentum=0.9)

# Train the model (using a generic train_model function)

# model_extractor = train_model(model_extractor, criterion, optimizer_extractor,...)

Notice that model_extractor.fc.parameters() is passed to the optimizer, ensuring only the new layer's weights are updated.

Step 3: Implementing Finetuning Here, we allow all parameters to be updated during training.

Python

# Load pre-trained ResNet18

model_finetune = models.resnet18(weights='IMAGENET1K_V1')

# Replace the final fully connected layer

num_ftrs = model_finetune.fc.in_features

model_finetune.fc = nn.Linear(num_ftrs, len(class_names))

model_finetune = model_finetune.to(device)

# Define loss and optimizer (for all model parameters)

criterion = nn.CrossEntropyLoss()

optimizer_finetune = optim.SGD(model_finetune.parameters(), lr=0.001, momentum=0.9)

# Train the model

# model_finetune = train_model(model_finetune, criterion, optimizer_finetune,...)

In this case, model_finetune.parameters() is passed to the optimizer, making all weights trainable. After training both models, their validation accuracies can be compared to see the effect of each strategy on this specific task.

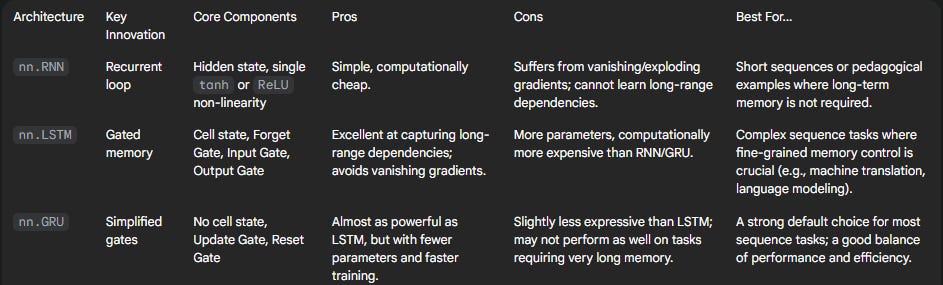

Day 8: Recurrent Neural Networks (RNNs) for Sequential Data

Objective: To understand and implement Recurrent Neural Networks (RNNs), a class of neural networks designed specifically for processing sequential data where the order of information is critical.

Theoretical Knowledge: The Concept of Memory

Feed-forward networks like MLPs and CNNs have a fundamental limitation: they assume that all inputs are independent of each other. They have no inherent mechanism to remember past inputs, which makes them unsuitable for tasks where context and order are paramount, such as understanding language, forecasting time series, or analyzing speech.

Recurrent Neural Networks (RNNs) solve this by introducing a loop. The core idea is that the network's output at a given time step depends not only on the current input but also on the outputs from previous time steps. This is achieved through a hidden state, which acts as a form of memory. At each step in the sequence, the RNN takes the current input element and the hidden state from the previous step, processes them, and produces an output and a new hidden state. This new hidden state, which is a compressed representation of the entire history seen so far, is then passed to the next time step.

The evolution of this hidden state over time is how the network learns context. For example, when processing the phrase "New York," the hidden state passed from processing "New" carries the information that "York" is likely part of a proper noun. However, this simple recurrent mechanism is also the source of the RNN's greatest weakness: the vanishing gradient problem. During backpropagation, gradients must flow backward through time, across every step of the recurrent calculation. For long sequences, these gradients are repeatedly multiplied by the recurrent weight matrix. If the values in this matrix are small, the gradients can shrink exponentially until they "vanish," making it impossible for the network to learn long-range dependencies. This critical limitation motivates the need for more advanced architectures like LSTMs and GRUs.

The torch.nn.RNN module in PyTorch encapsulates this logic. Its key parameters include :

input_size: The number of features for each element in the sequence (e.g., the dimension of a word embedding).hidden_size: The number of features in the hidden state.num_layers: The number of RNN layers to stack.batch_first=True: A highly recommended parameter that changes the expected input tensor shape from(seq_len, batch, features)to the more intuitive(batch, seq_len, features).

Hands-On Implementation: Character-level Name Classification

To build a strong intuition for how RNNs work, we will first implement one manually before using the built-in nn.RNN module. We will use the character-level name classification task from the official PyTorch tutorials, where the goal is to predict the language of origin of a name based on its spelling.

Step 1: Data Preparation This involves loading the dataset of names, creating a vocabulary of all unique characters, and writing helper functions to convert a name (string) and a category (string) into one-hot encoded tensors.

Python

# [48]

Step 2: Build the RNN Model Manually This implementation makes the recurrent step explicit, showing how the input and previous hidden state are combined at each step.

Python

import torch.nn as nn

class CharRNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(CharRNN, self).__init__()

self.hidden_size = hidden_size

# Linear layer to combine input and hidden state

self.i2h = nn.Linear(input_size + hidden_size, hidden_size)

# Linear layer to produce output from combined input and hidden state

self.i2o = nn.Linear(input_size + hidden_size, output_size)

self.softmax = nn.LogSoftmax(dim=1)

def forward(self, input_tensor, hidden_tensor):

combined = torch.cat((input_tensor, hidden_tensor), 1)

hidden = self.i2h(combined)

output = self.i2o(combined)

output = self.softmax(output)

return output, hidden

def initHidden(self):

# Initialize hidden state with zeros

return torch.zeros(1, self.hidden_size)

# Example instantiation

# n_letters = number of unique characters in vocabulary

# n_categories = number of languages

# rnn = CharRNN(n_letters, n_hidden, n_categories)

Step 3: Training Loop The training loop for this manual RNN iterates through each character of a name.

Python

# Conceptual training loop for one name

# criterion = nn.NLLLoss()

# learning_rate = 0.005

# hidden = rnn.initHidden()

# rnn.zero_grad()

# for i in range(line_tensor.size()): # Loop over characters

# output, hidden = rnn(line_tensor[i], hidden)

# loss = criterion(output, category_tensor)

# loss.backward()

# # Update weights

# for p in rnn.parameters():

# p.data.add_(p.grad.data, alpha=-learning_rate)

Step 4: Refactor with nn.RNN After understanding the manual process, we can refactor the model to use the efficient, built-in nn.RNN layer, which handles the loop over the sequence internally.

Python

class RNNRefactored(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNNRefactored, self).__init__()

self.hidden_size = hidden_size

self.rnn = nn.RNN(input_size, hidden_size, batch_first=True)

self.fc = nn.Linear(hidden_size, output_size)

def forward(self, x):

# x shape: (batch_size, seq_len, input_size)

# h0 shape: (num_layers, batch_size, hidden_size)

h0 = torch.zeros(1, x.size(0), self.hidden_size).to(x.device)

# out shape: (batch_size, seq_len, hidden_size)

# hidden shape: (num_layers, batch_size, hidden_size)

out, hidden = self.rnn(x, h0)

# Use the hidden state of the last time step for classification

out = self.fc(hidden.squeeze(0))

return out

This refactored model is far more concise and computationally optimized, especially for batches of sequences.

Day 9: Advanced RNNs: LSTM and GRU

Objective: To overcome the limitations of simple RNNs by implementing and understanding Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks, which are the standard tools for most sequence modeling tasks.

Theoretical Knowledge: The Power of Gates

As established on Day 8, simple RNNs suffer from the vanishing gradient problem, making them incapable of learning dependencies between elements that are far apart in a sequence. LSTMs and GRUs were developed to solve this exact issue. They introduce "gates"—neural networks that regulate the flow of information.

Long Short-Term Memory (LSTM) The LSTM introduces a cell state, which acts as a separate memory "conveyor belt." Information can be added to or removed from the cell state, and this process is controlled by three gates :

Forget Gate: This gate looks at the previous hidden state and the current input and decides which information from the old cell state should be discarded. It outputs a number between 0 (completely forget) and 1 (completely keep) for each number in the cell state.

Input Gate: This gate decides which new information should be stored in the cell state. It has two parts: a sigmoid layer that decides which values to update, and a

tanhlayer that creates a vector of new candidate values.Output Gate: This gate decides what the next hidden state should be. It takes the (filtered) cell state, passes it through a

tanhfunction, and multiplies it by the output of a sigmoid gate to determine which parts of the cell state to output.

This gated mechanism allows the cell state to carry information over very long sequences, effectively mitigating the vanishing gradient problem.

Gated Recurrent Unit (GRU) The GRU is a simplification of the LSTM that often achieves comparable performance with less computational overhead. It merges the cell state and hidden state and uses only two gates:

Update Gate: This gate acts like a combination of the LSTM's forget and input gates. It decides how much of the previous hidden state to keep and how much of the new candidate hidden state to add.

Reset Gate: This gate determines how much of the past information to forget when computing the new candidate hidden state.

Because it has fewer parameters, a GRU is slightly faster to train and can sometimes generalize better on smaller datasets.

The choice between LSTM and GRU is often empirical. LSTM, being more expressive with its separate cell state, might be better for tasks requiring very fine-grained memory control. GRU, being simpler and faster, is an excellent default choice.

Hands-On Implementation: Sentiment Analysis with LSTMs

We will tackle a classic NLP sequence classification task: classifying IMDB movie reviews as positive or negative.

Step 1: Data Preprocessing This involves loading the text data, cleaning it (lowercase, remove punctuation), tokenizing it (splitting into words), building a vocabulary (mapping words to integers), and padding all sequences to a fixed length to enable batching. Libraries like

torchtext or tokenizers from Hugging Face can automate much of this process.

Step 2: Build the LSTM Model The model will consist of an embedding layer, a bidirectional LSTM layer, and a final linear layer for classification.

Python

import torch.nn as nn

class SentimentLSTM(nn.Module):

def __init__(self, vocab_size, embedding_dim, hidden_dim, output_dim, n_layers, bidirectional, dropout):

super().__init__()

# Embedding layer converts word indices to dense vectors

self.embedding = nn.Embedding(vocab_size, embedding_dim)

# LSTM layer

self.lstm = nn.LSTM(embedding_dim,

hidden_dim,

num_layers=n_layers,

bidirectional=bidirectional,

dropout=dropout,

batch_first=True)

# Fully connected layer

# hidden_dim * 2 because the LSTM is bidirectional

self.fc = nn.Linear(hidden_dim * 2, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

# text shape: [batch size, sentence length]

embedded = self.dropout(self.embedding(text))

# embedded shape: [batch size, sentence length, embedding dim]

# packed_output is the output of the LSTM for each time step

# hidden is the final hidden state

# cell is the final cell state

packed_output, (hidden, cell) = self.lstm(embedded)

# Concatenate the final forward (hidden[-2,:,:]) and backward (hidden[-1,:,:]) hidden states

hidden = self.dropout(torch.cat((hidden[-2,:,:], hidden[-1,:,:]), dim=1))

# hidden shape: [batch size, hidden_dim * 2]

return self.fc(hidden)

# Example instantiation

# VOCAB_SIZE = len(vocab)

# EMBEDDING_DIM = 100

# HIDDEN_DIM = 256

# OUTPUT_DIM = 1

# N_LAYERS = 2

# BIDIRECTIONAL = True

# DROPOUT = 0.5

# model = SentimentLSTM(VOCAB_SIZE, EMBEDDING_DIM, HIDDEN_DIM, OUTPUT_DIM, N_LAYERS, BIDIRECTIONAL, DROPOUT)

Step 3: Training and Evaluation The training loop will be similar to previous days. The key difference is the choice of loss function: nn.BCEWithLogitsLoss is used for binary classification. It takes the raw logit output from the model and is more numerically stable than using a Sigmoid layer followed by nn.BCELoss.

Step 4: Switching to GRU One of the advantages of PyTorch's modular design is the ease of experimentation. The nn.LSTM layer can be replaced with an nn.GRU layer with minimal code changes to compare performance. The

GRU does not return a cell state.

Table: Recurrent Architectures at a Glance

This table provides a concise comparison to guide model selection between the different recurrent architectures.

Day 10: The Transformer Architecture

Objective: To understand the revolutionary Transformer architecture, which underpins most modern state-of-the-art NLP models, and to implement its key components in PyTorch.

Theoretical Knowledge: Attention is All You Need

The introduction of the Transformer architecture in the 2017 paper "Attention Is All You Need" marked a paradigm shift in sequence modeling. It addressed a fundamental bottleneck of RNNs: their sequential nature. Because an RNN must process a sequence token by token, its computations cannot be parallelized across the time dimension, making it slow for very long sequences.

The Transformer's radical idea was to discard recurrence entirely and rely solely on a mechanism called self-attention to draw connections between words in a sequence. In self-attention, to compute the representation for a given word, the model can directly look at and weigh the importance of all other words in the sequence, regardless of their distance. This ability to process all tokens simultaneously allows for massive parallelization on modern hardware like GPUs, which is the key computational property that has enabled the training of enormous models like GPT and BERT.

The key components of the Transformer are :

Scaled Dot-Product Attention: This is the core computational unit. For each input token, three vectors are created: a Query (Q), a Key (K), and a Value (V). The Query represents the current token's "request" for information. It is compared against the Keys of all other tokens in the sequence via a dot product. The result is scaled (divided by the square root of the key dimension, dk, for stability) and passed through a

softmaxfunction to get attention weights. These weights are then used to create a weighted sum of the Value vectors, producing the output for the current token. The formula is: Attention(Q,K,V)=softmax(dkQKT)V.Multi-Head Attention: Instead of performing a single attention calculation, the Transformer runs scaled dot-product attention multiple times in parallel. Each parallel run is called an "attention head." The input Q, K, and V vectors are linearly projected into different subspaces for each head. This allows the model to jointly attend to information from different representational subspaces at different positions, capturing various types of relationships (e.g., syntactic, semantic) simultaneously. The outputs of all heads are then concatenated and linearly projected back to the original dimension.

Positional Encoding: Since the self-attention mechanism is permutation-invariant (it treats the input as a "bag of words"), the model has no inherent sense of word order. To fix this, positional encodings—vectors that represent the position of each token in the sequence—are added to the input word embeddings. These can be learned parameters or fixed sine and cosine functions of different frequencies.

Encoder-Decoder Structure: The original Transformer consists of an encoder stack, which processes the input sequence (e.g., a sentence in German), and a decoder stack, which generates the output sequence token-by-token (e.g., the translation in English). The decoder has an additional cross-attention layer that allows it to attend to the encoder's output.

Supporting Components: Each encoder and decoder layer also contains a position-wise Feed-Forward Network (a simple two-layer MLP applied independently to each position), Residual Connections (shortcut connections that help with gradient flow in deep networks), and Layer Normalization.

Hands-On Implementation: Building a Transformer from Scratch

To truly understand the architecture, it is highly instructive to build its components manually, following the spirit of guides like "The Annotated Transformer" and other from-scratch tutorials.

Step 1: Multi-Head Attention This is the most complex component. The implementation involves defining linear layers for Q, K, and V, and methods for splitting the tensors into multiple heads, performing scaled dot-product attention, and combining the heads back together.

Python

import torch.nn as nn

import math

class MultiHeadAttention(nn.Module):

def __init__(self, d_model, num_heads):

super(MultiHeadAttention, self).__init__()

assert d_model % num_heads == 0, "d_model must be divisible by num_heads"

self.d_model = d_model

self.num_heads = num_heads

self.d_k = d_model // num_heads

self.W_q = nn.Linear(d_model, d_model)

self.W_k = nn.Linear(d_model, d_model)

self.W_v = nn.Linear(d_model, d_model)

self.W_o = nn.Linear(d_model, d_model)

def scaled_dot_product_attention(self, Q, K, V, mask=None):

attn_scores = torch.matmul(Q, K.transpose(-2, -1)) / math.sqrt(self.d_k)

if mask is not None:

attn_scores = attn_scores.masked_fill(mask == 0, -1e9)

attn_probs = torch.softmax(attn_scores, dim=-1)

output = torch.matmul(attn_probs, V)

return output

def split_heads(self, x):

batch_size, seq_length, d_model = x.size()

return x.view(batch_size, seq_length, self.num_heads, self.d_k).transpose(1, 2)

def combine_heads(self, x):

batch_size, _, seq_length, d_k = x.size()

return x.transpose(1, 2).contiguous().view(batch_size, seq_length, self.d_model)

def forward(self, Q, K, V, mask=None):

Q = self.split_heads(self.W_q(Q))

K = self.split_heads(self.W_k(K))

V = self.split_heads(self.W_v(V))

attn_output = self.scaled_dot_product_attention(Q, K, V, mask)

output = self.W_o(self.combine_heads(attn_output))

return output

Step 2-5: Other Components and Final Model Similarly, one would implement the PositionwiseFeedForward network, the PositionalEncoding module, and then combine them into an EncoderLayer. Finally, after understanding the inner workings, the engineer can leverage the highly optimized, built-in PyTorch modules: torch.nn.Transformer, torch.nn.TransformerEncoder, and torch.nn.TransformerEncoderLayer for practical applications. These modules abstract away the manual implementation, providing a robust and efficient way to build Transformer-based models.

Part III: Becoming an Expert Engineer (Days 11-15)

This final week transitions from implementing specific architectures to mastering the art and science of training, optimizing, and deploying them. These are the skills that distinguish a true expert engineer: someone who can not only build a model but also make it performant, robust, and useful in the real world. This phase is about moving from being a model builder to being a solution architect.

Day 11: Advanced Training Techniques

Objective: To move beyond the basic training loop by incorporating techniques that improve convergence speed, stability, and overall model performance, and to establish a systematic process for experimentation.

Theoretical & Hands-On Topics

Training deep neural networks is often a delicate process. A static set of hyperparameters rarely yields optimal results. Advanced techniques are required to guide the training process intelligently.

Learning Rate Schedulers (torch.optim.lr_scheduler) A fixed learning rate is a blunt instrument. At the beginning of training, a larger learning rate can help the model make rapid progress across the loss landscape. However, as training progresses and the model approaches a good minimum, a smaller learning rate is needed to allow for fine-grained adjustments and prevent overshooting the optimal point. Learning rate schedulers automate this dynamic adjustment.

Theory: Explain the rationale behind dynamic learning rates.

Hands-on: Implement and visualize the effects of two common schedulers on the Day 6 CIFAR-10 training script.

StepLR: Decays the learning rate by a factor ofgammaeverystep_sizeepochs. This is a simple, pre-defined schedule.Python

from torch.optim.lr_scheduler import StepLR

optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

scheduler = StepLR(optimizer, step_size=5, gamma=0.1) # Every 5 epochs, lr becomes lr * 0.1

ReduceLROnPlateau: A more dynamic scheduler that reduces the learning rate when a monitored metric, such as validation loss, has stopped improving for a 'patience' number of epochs.Python

from torch.optim.lr_scheduler import ReduceLROnPlateau

optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

scheduler = ReduceLROnPlateau(optimizer, 'min', patience=2, factor=0.5) # Reduce LR if val_loss doesn't improve for 2 epochs

# In the validation loop: scheduler.step(val_loss)

Experiment Tracking with TensorBoard Training deep learning models is an empirical science. Simply printing loss values to the console is insufficient for serious experimentation. A systematic approach to tracking metrics, visualizations, and hyperparameters is essential for reproducibility and analysis. TensorBoard is the standard tool for this in the PyTorch ecosystem.

Theory: Introduce TensorBoard as a suite of web applications for inspecting and understanding training runs and graphs.

Hands-on: Integrate TensorBoard logging into the training loop.

Python

from torch.utils.tensorboard import SummaryWriter

# At the start of the script

writer = SummaryWriter('runs/cifar10_experiment_1')

# Inside the training loop (e.g., at the end of each epoch)

writer.add_scalar('Loss/train', train_loss, epoch)

writer.add_scalar('Loss/val', val_loss, epoch)

writer.add_scalar('Accuracy/val', val_acc, epoch)

writer.add_scalar('Learning_Rate', optimizer.param_groups['lr'], epoch)

# To visualize the model graph

# images, labels = next(iter(train_loader))

# writer.add_graph(model, images.to(device))

writer.close()

After running the script, TensorBoard can be launched from the command line (

tensorboard --logdir=runs) to view the interactive dashboards. This transforms training from a "fire-and-forget" process into an observable and reproducible experiment, allowing for direct comparison of different runs and building intuition about how changes (like adding a scheduler) affect the results.

Gradient Clipping In deep networks, particularly RNNs, it's possible for gradients to grow exponentially large during backpropagation, leading to the exploding gradient problem. This causes unstable training and results in NaN values for the loss. Gradient clipping is a simple technique to mitigate this by capping the magnitude (norm) of the gradients before the optimizer step.

Theory: Explain the problem and the solution.

Hands-on: Show where to add the clipping step in the training loop.

Python

# Inside the training loop, after loss.backward() and before optimizer.step()

torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)

optimizer.step()

Day 12: Systematic Hyperparameter Tuning

Objective: To transition from manual, ad-hoc hyperparameter tuning to systematic, automated optimization using industry-standard libraries, a crucial step for achieving state-of-the-art performance.

Theoretical Knowledge: The Search for Optimal Hyperparameters

The performance of a deep learning model is extremely sensitive to a set of hyperparameters that are set before the training process begins. These include the learning rate, batch size, dropout probability, optimizer choice, and architectural details like the number of layers or neurons. Finding the optimal combination of these values is a challenging search problem.

Common search strategies include :

Manual Search: Relying on intuition and trial-and-error. This is time-consuming and often explores only a narrow, biased region of the search space.

Grid Search: Exhaustively searching through a manually specified subset of the hyperparameter space. It is simple but suffers from the curse of dimensionality, becoming computationally infeasible very quickly.